Translating Data into AI Skills

Heavybit

How We Went from LLMs to Agents to Skills

In late 2025, Anthropic declared skills (modular, reusable, file-based instructions that encapsulate domain expertise) an open standard. Since then, hundreds of thousands of users have reportedly installed SKILL.md files to improve their agents’ performance on a range of tasks, from coding in specialized languages to non-coding work such as drafting documentation or creating datasets.

While using Claude Code in his day-to-day work, Turkish developer Yusuf Karaaslan began experimenting with skills and eventually ended up designing the Skill Seekers project, which processes and translates a variety of data sources (including GitHub repos, PDFs, videos, and others) into agentic skills. Below, he explains what led him to create the project and how thousands of users worldwide are finding value in agentic skills.

From Context Limits to Skill Libraries

Karaaslan, a game developer by trade, notes that his first foray into skills came from working with the back end of Steam, the popular online game storefront. He started with Claude's official skill-creator skill, feeding it every relevant link from Steam's documentation one by one and asking it to produce a complete skill for the inventory system, so that Claude would give him direct answers instead of guesses. It didn't work.

"No matter what I did, Claude would skip most of the input or cut it down far too aggressively, and it kept guessing at what I was asking for. My read was that because I'd asked it to handle both the heavy data gathering and the processing in one shot, it was optimizing for cost and cutting corners on the part that mattered most. Even the cut-down output was a little more confident than nothing, which was the clue. So I sat down and started building a separate system to do the heavy data lifting, the part that doesn't actually need AI in the loop. That was the first prototype of Skill Seekers."

The contrast once he piped that prototype's output back into Claude was hard to miss. "Before, the answers were always hedged. 'Maybe try this, maybe try that.' After I added the Skill Seekers output to the context, the tone changed completely: 'You can't do it the way you want with Steam inventory, you need an external backend.' Much more confident, much more direct, and actually correct. Even when I challenged it on details, it stayed true to Steam's actual behavior. The funny part is that all this happened the same day Anthropic announced skills. The first version of Skill Seekers shipped that same night.”

From there, Karaaslan kept iterating on the prototype, prompting Claude Code to extend it with web scraping and working around internal context limits until each new data source slotted in cleanly. "Until a certain point, my focus was on feeding in as many data sources as possible to build a richer, more complex skill: The need to download a dynamic website, or the need to scrape a codebase. Once I felt we'd covered almost all the major data sources and some of the more niche ones, I started backtracking to improve the system architecture. At that point I hit Claude's internal limits again, this time when analyzing the whole codebase. So I fell back on my usual trick: I had Claude generate full class diagrams, package diagrams, and flow diagrams for the key systems. With those UML diagrams in hand, I could pinpoint exact pain points, architectural problems, and more. From there, I designed the new architecture end-to-end and replaced the live version module by module.”

The developer’s iterative approach led him to map every connection and flow within the project and eventually unify everything behind a single interface, connecting key functions and removing duplicate branches. He relies on three primary skills of his own for design, implementation, and PR reviews. "I actually developed these skills side by side with Skill Seekers itself, based on my own observations along the way. And I just open-sourced my AI workflows too.”

“Using my design skill, I start by using a card that simply explains test-driven development, and from that, I generate UML diagrams of how that should work. I then review, accept changes, and create issues and tasks, then create a development report of any issues. After everything is complete, I will send the output to the PR review skill. In this way, I have full control over the project.”

Accelerating AI Learning by Modeling Human Learning

Karaaslan says his approach to building skills started out much the same way he learns new things himself. “Before using AI, [to learn new development topics] I would read documentation, take notes, and learn from example projects. At first, I mimicked this process.”

He took a similar approach as he extended Skill Seekers to ingest data in a variety of formats. “To bring the way I learn from videos into Skill Seekers, I would get code from the screen, collect timelines, and copy transcripts and screenshots into Skill Seekers. I was just mimicking how I learn without AI.”
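Learning from a video this way means lining up what is on screen with what is being said at that moment. The article doesn't describe how Skill Seekers does this internally, so the following is only a minimal sketch of one piece of it, assuming the transcript arrives as non-overlapping timestamped segments and screenshots are captured at known times. `Segment` and `align_frames` are hypothetical names.

```python
from bisect import bisect_right
from dataclasses import dataclass


@dataclass
class Segment:
    """One timestamped transcript segment (times in seconds).

    Assumption: segments do not overlap.
    """
    start: float
    end: float
    text: str


def align_frames(segments, frame_times):
    """Pair each screenshot timestamp with the transcript text spoken at that moment.

    Returns a list of (timestamp, text) tuples; text is None when no segment
    covers the timestamp (for example, silence or the end of the video).
    """
    ordered = sorted(segments, key=lambda s: s.start)
    starts = [s.start for s in ordered]
    pairs = []
    for t in frame_times:
        # Index of the last segment that starts at or before t.
        i = bisect_right(starts, t) - 1
        if i >= 0 and ordered[i].start <= t < ordered[i].end:
            pairs.append((t, ordered[i].text))
        else:
            pairs.append((t, None))
    return pairs


segments = [
    Segment(0.0, 4.5, "Open the inventory settings"),
    Segment(4.5, 9.0, "Now add an item definition"),
]
print(align_frames(segments, [2.0, 6.0, 12.0]))
# [(2.0, 'Open the inventory settings'), (6.0, 'Now add an item definition'), (12.0, None)]
```

Each pair can then be written into the skill as a screenshot (or code extracted from it) next to the explanation that accompanied it, which is roughly what taking notes while watching a tutorial produces by hand.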

After the project’s release, he watched how others used it and noticed that users wanted to review new additions. “People would want to adjust some stuff, add some stuff, remove some stuff. I wanted to automate the process, so I created a skill for that, and afterwards, we created the workflows, and gave the skill to that workflow. Exactly what we would do manually. It’s about mimicking, optimizing, and automating.”

What Skills Add for Developers

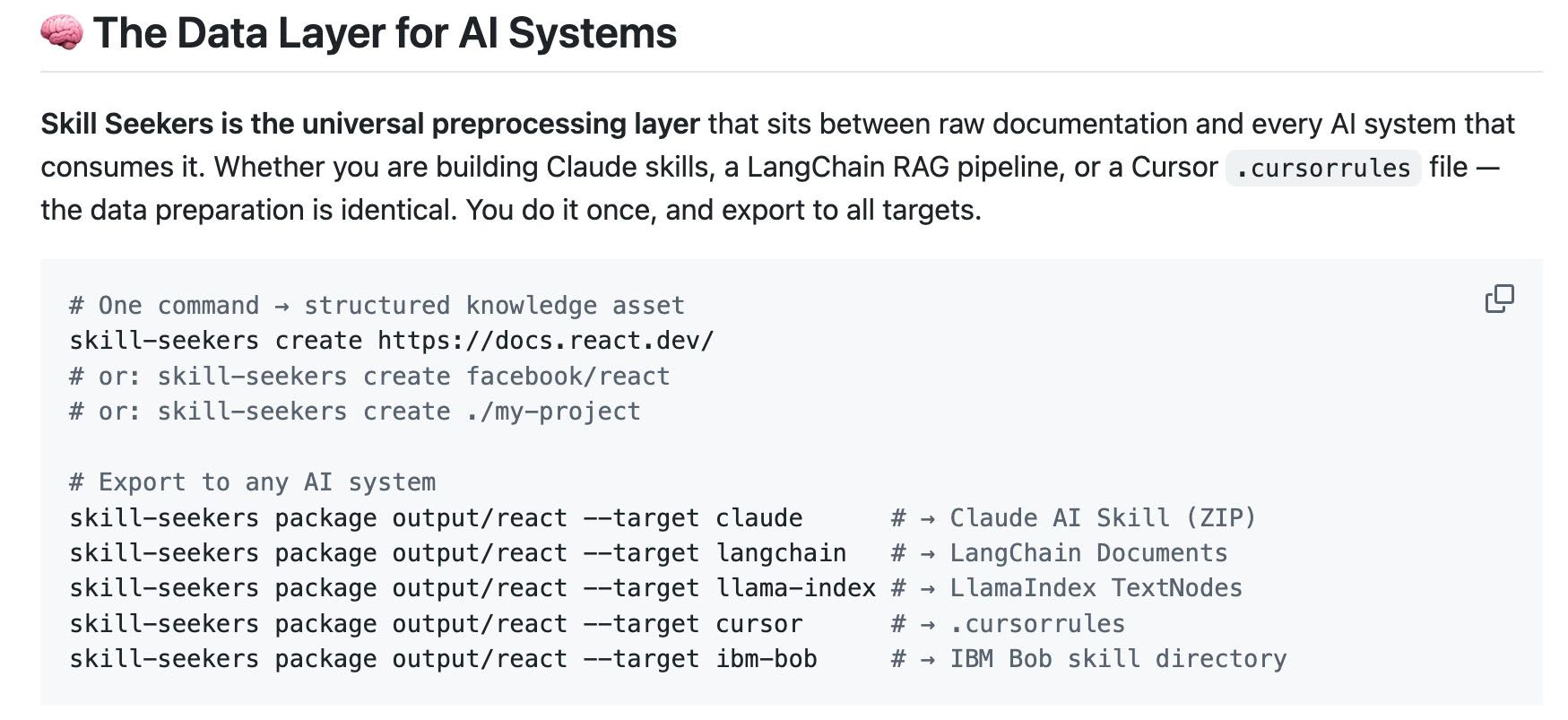

Karaaslan suggests a variety of development use cases have emerged from the project. “It started as a helper, but it has become a data management system for any kind of input that can be structured and reproduced, and with minimal cost because once you create a new skill config file, you can run that config again and again with a single command-line prompt, or just by telling Claude to do it directly via MCP.”
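The article doesn't show Skill Seekers' config format, so this sketch invents a hypothetical one purely to illustrate why a config makes reruns cheap: it is just data, and loading the same file always yields the same sequence of AI-free pipeline steps. The field names (`name`, `sources`, `type`, `url`, `repo`) and the `load_config` and `plan` helpers are assumptions, not the project's API.

```python
import json
from dataclasses import dataclass

# Hypothetical config format for illustration only; Skill Seekers' real schema may differ.
EXAMPLE_CONFIG = """
{
  "name": "steam-inventory",
  "sources": [
    {"type": "docs", "url": "https://example.com/docs"},
    {"type": "github", "repo": "example-org/example-repo"}
  ]
}
"""


@dataclass(frozen=True)
class SkillConfig:
    name: str
    sources: tuple  # each entry is a dict describing one data source


def load_config(text):
    """Parse and minimally validate a config (hypothetical schema)."""
    data = json.loads(text)
    if not data.get("name"):
        raise ValueError("config needs a non-empty 'name'")
    return SkillConfig(name=data["name"], sources=tuple(data.get("sources", [])))


def plan(config):
    """Turn a config into the ordered, AI-free steps a rerun would execute."""
    steps = []
    for src in config.sources:
        target = src.get("url") or src.get("repo") or src.get("path", "?")
        steps.append(f"fetch {src['type']} {target}")
    steps.append(f"build skill {config.name}")
    steps.append(f"package {config.name}")
    return steps


config = load_config(EXAMPLE_CONFIG)
for step in plan(config):
    print(step)
```

Because the plan depends only on the config, rerunning it after the upstream docs or repo change refreshes the skill without anyone re-describing what should go into it.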

The developer lists a variety of use cases, including an integration partner that uses the project to power its marketing and helper bots with skills built from codebases, so the bots can answer user questions about that code. An academic AI research startup uses the project to scan new research papers for usable skills that the team can then apply internally.

“Like I said, it's all just data that AI can understand. You can update to new versions easily and connect to your codebase in one click. So you can create your own tools, your own skills from your codebase. You can give it official documentation, your own implementation, examples, maybe some video, and it all gets merged into one big skill that includes everything.”
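Merging several inputs into "one big skill" could look roughly like the following minimal sketch. It assumes each source has already been extracted to plain text and writes a single document loosely modeled on the SKILL.md layout (YAML front matter with a name and a description, followed by Markdown sections). `merge_sources` is a hypothetical helper; Skill Seekers' actual merge logic isn't described in the article and is likely more involved.

```python
def merge_sources(skill_name, description, sources):
    """Combine several extracted sources into a single skill document.

    sources: list of (section_title, text) pairs, e.g. official docs, your own
    implementation notes, examples, or a video transcript.
    """
    lines = [
        "---",
        f"name: {skill_name}",
        f"description: {description}",
        "---",
        "",
        f"# {skill_name}",
        "",
    ]
    seen = set()
    for title, text in sources:
        body = text.strip()
        # Skip empty sources and verbatim duplicates so the context isn't wasted.
        if not body or body in seen:
            continue
        seen.add(body)
        lines += [f"## {title}", "", body, ""]
    return "\n".join(lines)


doc = merge_sources(
    "my-backend",
    "Project-specific knowledge for my-backend",
    [
        ("Official docs", "Endpoint reference..."),
        ("Our implementation", "We wrap the client in a retry layer..."),
        ("Examples", "   "),
    ],
)
print(doc)
```

Dropping empty and duplicated sections is a small nod to the context-engineering point that follows: whatever ends up in the skill competes for the agent's context window.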

“After you create skills, you can add a workflow to create an agent and system calls. I use that feature often because you can control the context. It's actually a helpful tool for context engineering.”

“In my workflows I have an automated skill that's triggered every time I push to the main repo. Every time I want to do design work I have a ‘design repos’ skill that I create with Skill Seekers focusing on the architecture and API. Whenever I run my agent skill, it automatically uploads that skill, and every time I run it, I know I have the latest version of my architecture as knowledge within the context inside the agent.”
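One way to keep a push-triggered refresh cheap is to fingerprint the source files and rebuild the skill only when they have actually changed. This is a hypothetical sketch, not necessarily how Skill Seekers or Karaaslan's workflow does it; `fingerprint`, `refresh_if_changed`, and the `rebuild` callback are illustrative names, and a CI job would be responsible for storing the last fingerprint between runs.

```python
import hashlib


def fingerprint(files):
    """Stable SHA-256 over {path: bytes}, independent of dict order."""
    h = hashlib.sha256()
    for name in sorted(files):
        for part in (name.encode("utf-8"), files[name]):
            # Length-prefix each part so a different split of the same bytes
            # between path and content feeds different input to the hash.
            h.update(len(part).to_bytes(8, "big"))
            h.update(part)
    return h.hexdigest()


def refresh_if_changed(files, last_fingerprint, rebuild):
    """Call rebuild() only when the sources differ from the last build.

    Returns the current fingerprint so the caller (e.g. a CI job) can store it.
    """
    current = fingerprint(files)
    if current != last_fingerprint:
        rebuild()
    return current


sources = {"docs/architecture.md": b"v1", "api/openapi.yaml": b"paths: {}"}
fp = refresh_if_changed(sources, None, lambda: print("rebuilding skill"))  # prints once
fp = refresh_if_changed(sources, fp, lambda: print("rebuilding skill"))    # no rebuild
```

The check costs one pass over the files, so it can run on every push while the expensive scrape-and-enhance work only happens when the architecture docs or API actually move.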

How to Think About Skills for Beginners

“Let's say you’re not very fond of AI, and you just want to do your work as a developer. Developers always think in terms of architecture, how we do stuff, how we structure stuff. If you want to use some new back-end framework or a new language you’re not experienced with, you can stay focused on system architecture while Skill Seekers builds a skill that turns your agent into an expert on your tech stack. The best part is that Skill Seekers has an MCP, so you can just casually tell Claude to create skills for your project. No extra steps.”

“Let’s say we wanted to start a new project and it's in an area we’re not familiar with. We might want to do some light experimentation before building because the cost of learning seems too high. Previously, we might avoid focusing too much on learning entirely new frameworks and languages. We would tend to just stay with what we know now.”

“You can tell Skill Seekers, ‘I want to do this project, I want to use this technology, I want to try this new framework, I want to try this new library,’ in casual, natural language. The MCP will trigger and generate the latest, top-of-the-line skill for you. You can just say, ‘I have an idea’ or ‘I want to try this functionality of this language or this library,’ and you start writing anything that you can test.”

"It's not too different from being an engineering lead. You say, 'I have a vision. I want to do this thing,' and it creates a team for you that knows that field and executes on your vision. You have a problem, you have a solution, you have a tool, you have a limitation, you have an output. This is always the same."

Real-World Use Cases for Skills

"The biggest use case I see is builders using skills to power support for their GitHub repos across the different language versions they need to maintain. Each version has its own skill, and every time they push an update, an automatic trigger refreshes the bot's knowledge. Doing that by hand is incredibly time-consuming."
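Keeping one skill per maintained version mostly comes down to routing: a push to a release branch rebuilds only that version's skill, and a question about a given version is answered from the matching one. The sketch assumes versions are identified by a `major.minor` number somewhere in the branch or tag name; `VersionedSkills`, `version_key`, and the `build` callback are hypothetical.

```python
import re


def version_key(ref):
    """Map a ref like 'refs/heads/release/2.1' or 'v2.1.3' to a 'major.minor' key."""
    m = re.search(r"(\d+)\.(\d+)", ref)
    return f"{m.group(1)}.{m.group(2)}" if m else None


class VersionedSkills:
    """Holds one skill per 'major.minor' version (hypothetical registry)."""

    def __init__(self):
        self._skills = {}  # 'major.minor' -> skill text

    def on_push(self, ref, build):
        """Rebuild only the skill for the pushed version; return its key."""
        key = version_key(ref)
        if key is None:
            return None  # not a versioned branch; leave all skills alone
        self._skills[key] = build(key)
        return key

    def skill_for(self, version):
        """Look up the skill that matches a user's version string, if any."""
        return self._skills.get(version_key(version))


registry = VersionedSkills()
registry.on_push("refs/heads/release/2.1", lambda k: f"skill for {k}")
print(registry.skill_for("v2.1.7"))  # skill for 2.1
```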

Karaaslan's most-used example comes from inside his own company, where the team builds with Unity. He started by setting up an internal skill marketplace so developers could share work with each other, then began authoring skill config files for the technologies the team uses day-to-day. It didn't take long to spot the bottleneck. "Even with Skill Seekers doing the hard part, the surrounding loop was repetitive: Create a config, generate a skill, package it, and manually upload it to the marketplace. The friction was in everything around the generation step."

The developer explains his process of layering in fixes, one at a time. "First, I added a shared config repo so anyone on the team could publish or pull a config through their own Skill Seekers install. Then, I added packaging and uploading to the marketplace as native functionality inside Skill Seekers itself. The flow worked end-to-end, but I hit a new wall. The volume of data I was sending Claude during the local enhancement step burned through my usage almost immediately."
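The packaging half of that step might look like the following minimal sketch, which zips a skill's files under a top-level folder named after the skill and refuses to package anything without a SKILL.md. The folder layout is an assumption about what an internal marketplace would accept, `package_skill` is a hypothetical helper, and the upload itself is omitted because the article doesn't describe the marketplace's API.

```python
import io
import zipfile


def package_skill(name, files):
    """Zip a skill's files (relative path -> text) under a folder named after the skill.

    Returns the archive as bytes, ready to be written to disk or uploaded.
    """
    if "SKILL.md" not in files:
        raise ValueError("a skill needs a SKILL.md at its root")
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w", compression=zipfile.ZIP_DEFLATED) as zf:
        # Sorted so the archive's entry order is the same on every run.
        for rel_path in sorted(files):
            zf.writestr(f"{name}/{rel_path}", files[rel_path])
    return buf.getvalue()


archive = package_skill(
    "unity-input",
    {"SKILL.md": "# Unity input\n", "references/api.md": "Input reference...\n"},
)
print(len(archive), "bytes")
```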

The fix was to stop tying Skill Seekers to any single model. "I made it agent-, CLI-, and LLM-agnostic. Now I trigger the whole pipeline from the command line: Scrape, restructure, combine sources, run the local AI enhancement step on whichever model I want, package, and push straight to our internal marketplace. I tend to use OpenCode with Kimi for the enhancement step. I tend to get more usage with it, and in my own tests, the skill quality came out on par with Claude."
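The agnostic design he describes amounts to keeping every stage except enhancement deterministic and putting the model behind a small interface. A minimal sketch, assuming each source has already been scraped to text: `Enhancer`, `EchoEnhancer`, and `run_pipeline` are hypothetical names, and a real backend would wrap whichever CLI or API call (Claude, OpenCode with Kimi, and so on) you choose.

```python
from typing import Protocol


class Enhancer(Protocol):
    """Any model backend: takes a draft skill, returns an improved one."""

    def enhance(self, draft: str) -> str: ...


class EchoEnhancer:
    """Stand-in backend for testing; it only trims whitespace, no model involved."""

    def enhance(self, draft: str) -> str:
        return draft.strip() + "\n"


def run_pipeline(sources, enhancer: Enhancer, publish):
    """Deterministic stages first, then one pluggable AI stage, then publish."""
    cleaned = [s.strip() for s in sources if s.strip()]  # clean scraped text (no AI)
    combined = "\n\n".join(dict.fromkeys(cleaned))       # combine, dropping duplicates, keeping order
    enhanced = enhancer.enhance(combined)                # the only model-dependent step
    publish(enhanced)                                    # package + push, supplied by the caller
    return enhanced


published = []
result = run_pipeline([" docs ", "", "examples", "docs"], EchoEnhancer(), published.append)
```

Swapping models then means passing a different object with an `enhance` method; the scraping, combining, and publishing code stays the same, which is what lets the heavy, AI-free work run no matter which model handles enhancement.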

That decoupling is also where Karaaslan sees the project's longer-term direction. "My vision for Skill Seekers is for it to become a universal data layer for AI systems. Model-agnostic, source-agnostic, pipeline-friendly. Whatever the input, whatever the agent on the other end, the skill is the same shape. That's the point."
