Ep. #90, Outcome Engineering in the AI Era with Cory Ondrejka

On episode 90 of o11ycast, Ken Rimple and Jessica “Jess” Kerr speak with Cory Ondrejka. Together, they unpack the rise of agentic AI, the shifting identity of software engineers, and the growing importance of measuring real-world impact. Cory shares his concept of Outcome Engineering and how teams can adapt to a world where building is fast but validation is everything.

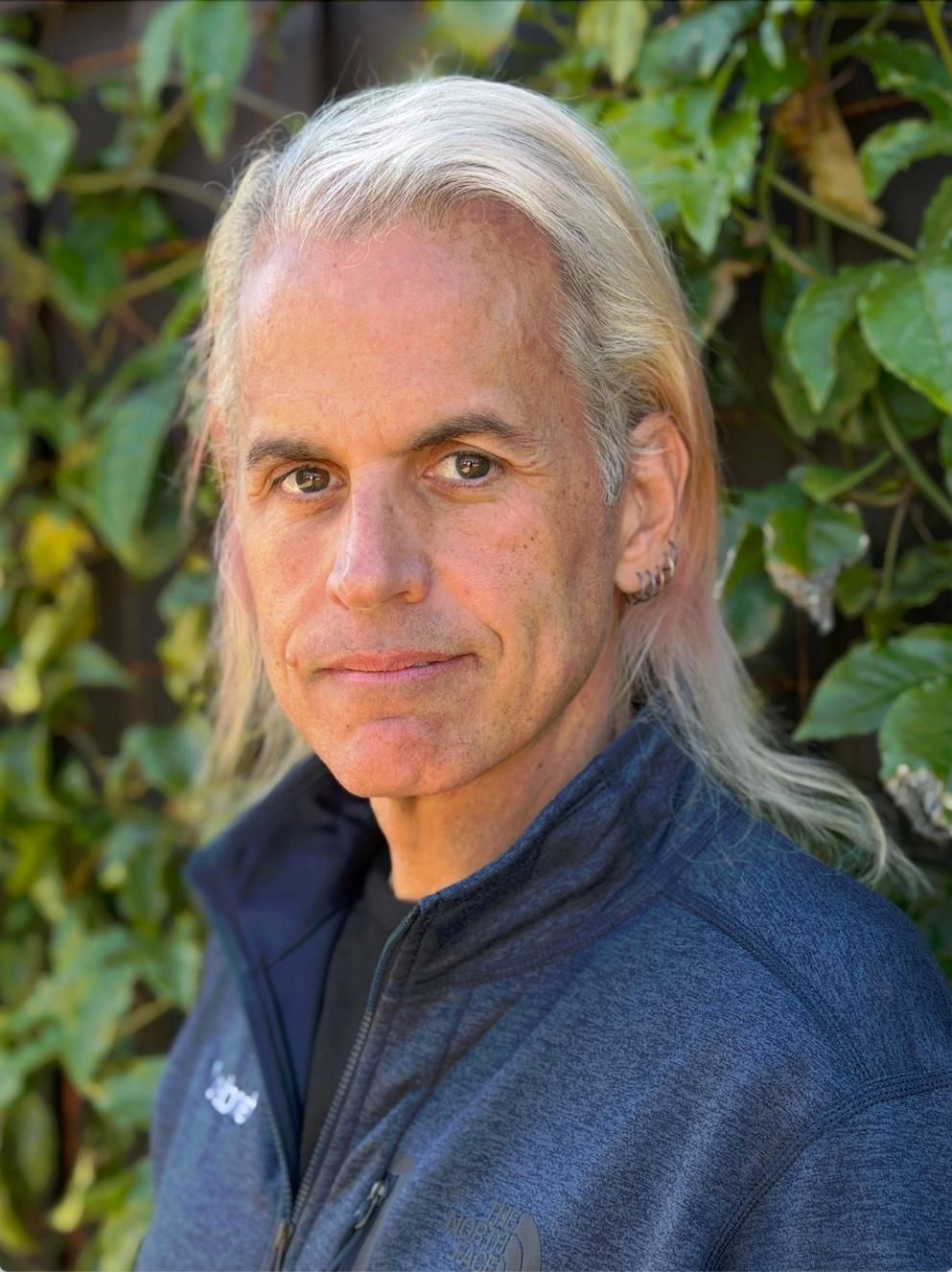

Cory Ondrejka is the CTO of Onebrief and a longtime technology leader with decades of experience building products and platforms. He has held leadership roles across the industry, including at Google and SmartNews, and is the author of the Outcome Engineering manifesto. Cory focuses on how AI and agentic systems are transforming software development and product innovation.

transcript

Cory Ondrejka: My morning AI reading now is what Outcome Engineering (o16g), the website, is off finding every day. And there's just so much happening in AI every freaking day right now. There is just no possible way to stay on top of it.

And since the last company I worked at, we were building news products, I had a pretty good idea of how I would want to stay on top of AI going forward. And it was a good test case for like, where is agentic right now and how good is it?

Because one of our really big discoveries back at SmartNews was back in March of 2025 was when pure agentic data processing flows basically passed conventional clustering and standard machine learning flows, in terms of how effectively and cheaply you could get to ranking and clustering and everything you want to do with really, really heterogeneous data.

So it's kind of a piece of cake to prompt an AI with, "as a technology reader in the Bay Area interested in these things, how would you rank these? How would you score these?"

And so in addition to using o16g as a place to put up the manifesto it was also a place to start hanging off a bunch of Cloudflare workflows and start from sort of the obvious set of AI RSS feeds. And then every time you find an interesting story that scored well, go see if that author themselves has a blog and Atom or RSS feed, add it to the list. And away we go. And it's been spidering out happily ever since.
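The scoring-and-spidering loop Cory describes can be sketched roughly as follows. This is a minimal illustration, not the o16g code: the `ScoredStory` shape, the 0 to 100 score range, the `threshold` parameter, and the injected `findFeed` lookup are all assumptions made for the example.

```typescript
// Hypothetical shapes; none of these names come from the o16g codebase.
interface ScoredStory {
  url: string;
  authorSite: string | null; // author's home page, if one was found
  score: number;             // assumed 0..100, as returned by a scoring prompt
}

// Builds a reader-persona prompt of the kind quoted in the transcript.
function buildScoringPrompt(persona: string, titles: string[]): string {
  const list = titles.map((t, i) => `${i + 1}. ${t}`).join("\n");
  return `As ${persona}, score each story from 0 to 100:\n${list}`;
}

// Returns the feed list extended with feeds belonging to authors
// whose stories scored at or above the threshold.
function expandFeeds(
  feeds: string[],
  stories: ScoredStory[],
  threshold: number,
  findFeed: (site: string) => string | null, // assumed RSS/Atom discovery step
): string[] {
  const known = new Set(feeds);
  for (const story of stories) {
    if (story.score < threshold || story.authorSite === null) continue;
    const feed = findFeed(story.authorSite);
    if (feed !== null) known.add(feed); // the Set drops duplicates
  }
  return Array.from(known);
}
```

Keeping feed discovery behind an injected function means the loop itself can be tested without network access; in a real workflow that function would fetch the author's page and look for a feed link.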

Jessica "Jess" Kerr: So you wrote your own personal news aggregator? I mean, you wrote it with yourself in mind.

Cory: If by "you" you mean Claude Code did, sure. Haha. And in fact the first version of it-- So I was on the east coast when I wrote the manifesto and I was on a flight home and had the thought, well, this site would be kind of more interesting if it also had some useful aggregation on it or maybe found top stories or something like that.

So I'm getting on the plane and I've got Claude Code Web open on my phone. And so I'm like, well, check out this and here's what I want to do. And proceeded on my phone to write the entire first version of it on the flight with Claude Code Web. And it pretty much worked out of the box.

And the blog was already a Cloudflare page, so I was sort of leaning in the, you know, the workers or workflow direction to begin with. But Claude was like, "yep, happy to go do it that way. It's pretty straightforward."

And you know what's nice is all of the major frontier models at this point have both API and REST APIs. And so it's so easy to hit them from workflows. And workflows are basically built for, you know, mildly asynchronous workflows. And so you combine that with, I think the sleaziest thing, which it does, which is GitHub as database.

Jess: Haha!

Ken Rimple: Haha, I'm going to quote that one. That's great.

Jess: Not weird. Not weird.

Cory: It's not that it's weird, it's just that it's sleazy. Right? Because GitHub has done so much engineering work to make it low latency and why not? And since, okay, so you've now found something, so you add it to the content directory, which then triggers a redeployment.

Jess: Right.

Cory: And there you go.

Jess: Great. So you've written your own AI news aggregator and deployed it on your website. And we're going to get to today's headline in the news aggregator, which is super relevant to o11ycast. But first I need you to introduce yourself and tell us about o16g.

Cory: Sure. So I'm Cory Ondrejka. I'm the CTO of Onebrief and a few months back, having been working really heavily with Agentic, both from a coding perspective and a product perspective pretty much since we started hearing about the modern version of it at Google, it just started becoming very, very clear that what my profession is, because for the last 30 plus years I have been paid to write code and build products, wasn't going to be the same profession real soon now.

Like not 10 years from now, not five years from now, but we can probably quibble whether it's, you know, nine months plus or minus three or six months plus or minus two.

Jess: It will vary.

Cory: It will vary. It will vary based on the kind of code you're writing. And whether you just like writing artisanal code because that's what you enjoy doing.

But if you are paid to deliver products or technology or services, your world has probably already changed.

And I think we had all sort of felt that first November and then January updates to the frontier models--

Jess: Was that November 2025 and January 2026?

Cory: Yeah.

Jess: Yes. Total turning point.

Cory: Just these moments where you're-- Because if we go back to February of 2025, those were the first moments of like, oh, it could do something and this is adorable and--

Jess: Oh this is useful, within very specific parameters.

Cory: Oh, a hundred percent. And you started spotting the first, "oh wait, this engineer actually used it" because there are just some identifiable quirks. And you know, particularly in TypeScript, Claude Code was sort of identifiable early on because it just felt very strongly about certain access patterns. And you're like, well, okay, let's all--

Jess: The em dash of TypeScript? Haha.

Cory: Pretty much. And that's fine. There's probably more TypeScript and JavaScript in the corpus than almost anything at this point. So great. But it certainly wasn't fire and forget and it certainly wasn't a huge accelerant yet.

And then Thanksgiving happened and then. "Huh, yeah, that's actually pretty, pretty useful for a wide range of cases."

And then by January and it was aligned with starting a new role at Onebrief and coming into an existing engineering team and thinking a lot about, well, where is AI right now and what should we be doing? And I had written a sort of AI table stakes post which was, it seems like if you're not doing AI to spot at least gaps in your test coverage and if you somewhat relevant for this podcast, like if you weren't using Observability anyway, like, why aren't you using AI to help make sure all of your code is in some way shape or form observable?

But beyond that, it sure looked like if you were doing web development, the frontier models could do most of that code. It might not do it exactly how you would do it and it might occasionally go wildly off the rails. But for the most part, if you're doing like a React website? Come on. Like it clearly--

Jess: Yeah. Why are you typing?

Cory: Why are you typing?

Jess: I also agree with you that it hit a point around December, January where I felt like it is irresponsible to code without using AI. Like you said, at least to check your work, at least to look at the rest of the code base and see what changes you missed.

Cory: Well, or think about onboarding. Why don't you have Claude, you know, whether it's Claude Code or one of the other frontier models, really exploring your code base to talk about, well, what do all these different modules do? And oh by the way, where are the undercovered parts of the code that maybe there be dragons? Which is super useful to know as a new engineer coming in.

And so had written that and had been having those discussions while meeting a new team. And anytime you come in in a new senior role, it's always this very exciting and delicate set of first conversations because everybody's trying to figure out who the hell you are. And, you know, everybody assumes you're just going to come in and do all these changes.

And my approach when arriving somewhere is to go on that structured listening tour and ask a lot of questions. And what was so interesting was how nervous everyone was about AI. And it wasn't just nervousness about capabilities, but nervousness about identity.

Jess: Yes, who am I now?

Cory: Who am I now? And for a lot of us, again, not to fully articulate how long I've been doing this, but having done this when writing code wasn't cool, I at least got to go through that period.

If your career spans 24 years, software engineering has been the baller job your entire career. It has been the most exciting, highest compensated, most amazing thing you could be doing. And you have been on the cutting edge of everything changing in the world for that entire period.

And unlike if you were in manufacturing or if you were in many other fields that the robots and AI have come for over the last 15, 20 or 30 or 40 years, this is a pretty new experience if you are a software engineer. So given that people don't make great decisions when they are scared or when they are worried about their identity, I was sitting in a hotel room late at night writing, because I tend to do long form writing to think about things.

And at the same time, I literally had sort of two documents going at the same time. And one was, what is this profession going forward? And then the other one was, okay, based on what we know now, what's the dream architecture? What's the architecture we could get to for agentic if we really are trying to give all of us a dark factory of agentic superpowers anytime we want to produce a product or code or technology?

And so I had these two really, really rough docs, literally dumped them both into Gemini and said, "go do deep research on everything on the web that touches any of this." And Gemini, I've found it to be pretty good at that type of research. And what was interesting is the first sentence it came back with was, "you're really talking about outcomes."

I'm like, oh, yeah, I agree with that because I've always been, and you can talk to plenty of folks at Honeycomb who I've worked with over the years-- Short term goals and outcomes is how I manage. Like, what did we get done this week? What did we say we were going to get done this week?

And for real, not, "we worked on something this week." No, no, no, no, no. What, what did we do? What was the outcome? What were the goals?

Jess: What is different in the world?

Cory: That's exactly right. And so outcome really jumped out at me. And so I started just playing with what-- Does outcome engineering work? And then because I love being way too cute for my own good, it's like, wait, o16g is available as a dot com. And so there we go.

And that first Gemini query had found 11 themes. And again, being too cute for my own good, I'm like, well, what about 16 themes?

Jess: Haha. That's why it's so long.

Cory: Exactly. And from there, six hours of rewriting every word until 4 in the morning was where the first version of it came from.

Jess: And it is this Outcome Engineering Manifesto.

Cory: The manifesto, yeah.

Jess: at o16g.com.

Cory: Correct. And is it possible to redefine what it means to be a software engineer in a world where we don't write code?

Jess: Right, because you compared it to manufacturing. Like if you're a welder and here come these robots that can weld, who are you? But the thing is, if we consider ourselves coders, and here come these robots who can code, then who are we?

But if we consider ourselves as software engineers who deliver useful software, who add capabilities to the world, then, well, we just add capabilities to the world with the robots. We are operating the robots. There's not that same conflict.

Cory: Except talking to a lot of software engineers, there was clearly a conflict. It is like the many, many, many ranty hacker news arguments about does typing speed matter? Right?

Jess: Haha!

Cory: Which happens all the time, like clockwork.

Jess: Since I don't read the-- Oh my gosh.

Cory: Yeah, well, that's healthy. Have the robots read it for you.

Jess: Now we have robots to do that.

Cory: Exactly and having worked with great coders who are fast typists, two finger typists, really slow typists, like, pretty sure that's not the gating aspect to it, but clearly-- And look, we have all had that moment of, "oh, I wrote this piece of code that I think worked, and it's awesome." And it had some algorithmic quirk to it that is super cool and neat.

Like, those are amazing moments, and they're amazing moments that make this career so much fun. But at the same time, for the vast majority of development, that activity is going to get replaced. And so that was why to me, picking a different name, if nothing else, helped me think about it differently and helped me try to frame it differently.

Jess: So it helps to think of it as a different job.

Cory: Correct. And the other piece is, I think it expands the aperture of who can be an outcome engineer.

Jess: Nice.

Cory: Because if you are a designer or a PM or a TPM or an artist or a chef or a writer--

Jess: Oh, yeah, yeah. Like Rick, our events coordinator. Right? He sets up the booths at conferences and now he's writing programs for these little Raspberry PI badges to turn them into games at the booth.

Cory: No, that's exactly right.

Ken: Yeah. I keep using this analogy, and it's really old and it's really tired, but it's like before printing presses, you had the monks scribing everything and you got to get a monk to write down the new copy of the book. Right? And now it's like, well, I can go to a printing press and then eventually you have desktop publishing and you can do it yourself.

And, you know, there's all these technical jumps that happen that your job gets redefined. I think the challenge for a lot of people might be they feel that creativity is being taken away. Their ownership, which we all eventually learn, code ownership should be shared to a degree mostly. A lot of people who feel scared feel like that's the one thing I had degrees of freedom in that I loved. And I think that's some of the challenge.

Cory: Look, this is a profession where you had a lot of power because you are writing the magic that changes the world. You were the wizard or priest, depending upon your framing, who knows the incantations. And you had the superpower to stand up in front of your pointy haired boss and spout technobabble at them and ensure that no matter what they were telling you to do, you could kind of still go do whatever you wanted to go do.

Jess and Ken: Haha!

Cory: And there is a narrow interpretation of that, that that might not actually be the optimal outcome for products, companies, customers, or anyone other than you. But there's certainly plenty of engineers who really relish that. And I think code ownership itself is a very complex question because on the one hand, deep domain knowledge is incredibly valuable. And single points of failure are just stupid.

So how do you navigate those two things? And look this has been my life's work is to produce technology code products while technology changes around us, to entertain, to connect, to amaze. It's what I do.

I had a short digression where I ran marketing for EMI Music, which means I got to work with rock stars. And as another friend once pointed out to me, and I now fully agree with:

When you look for prima donnas in the universe, there are no prima donnas in the universe like software engineers.

Jess: Really?

Cory: Oh, 100% like rock stars have nothing on them. And so this isn't a good or bad thing. It is simply when you've had a 20 year run, 30 year run of being the center of things, it's pretty glorious and it's a lot of fun. And I think that creativity and control question, I think really splits people as they think about the next steps.

And to my interpretation, look, I've been a manager, a director, a VP, a CTO. I've already had to be creative through discussion, through outcomes, through goal setting.

Jess: Oh through something other than code.

Cory: Something other than code. I've coded almost every day in every job I've ever had because I'm the world's worst artist. And so I am much faster coding something to show people than I am to sketch something.

And so it doesn't mean it's production code, but it's just how I, if I have an idea that's often the, the fastest way I can share it. And I love that about code. It's what was amazing back on Commodore PETs and Apple IIs, right? You could get them to do something and it was like, wow, this is--

Jess: Code is an expressive medium.

Cory: Yeah, that's exactly right. And so the question is, how does that feel if you are helping a team do something amazing where you get to just be blown away by their creativity and their ability to do something amazing. And who cares where the credit accrues because if you're really lucky, you plus the team did something that changed the world, that mattered and hopefully that's the goal.

And so when I am saying tongue in cheek that engineers are prima donnas, it's usually the interaction between engineers and either their managers or some non engineering function within the company.

Jess: Oh, so less among each other than externally facing or--

Cory: Well, I mean other than everybody can agree to be mean to like SRE, right, like there are other--

Jess: Oh no!

Cory: No, no, like again, these are all-- In game companies, it was always game designers versus the engineers versus the actual designers. Every profession has these internal splits where, who's really responsible for the outcome, right? And everybody, everybody wants to take their piece of that.

And AI really changes that dynamic because what does it mean when anyone can come into the room with an amazing working demo or maybe an app that's sitting up on the web somewhere where they're like, yeah, this thing, can we do something more like this? And we haven't had that experience before.

And to me I think that is a gloriously awesome expansion of what has been an incredibly exciting profession. Because why wouldn't we want more people to get to experience that? Why wouldn't we want more people to be able to take that idea that's been rattling around in their head that they've wanted to exist in the world where before it was, well, I need to go find an engineer, I need to go find a designer, I need to go find some team to go do this.

And going back to the genesis here, part of the imagined version of what would this combination of people and agents look like in a sustainable long term way that could produce incredibly creative things and could produce incredibly reliable things and could produce incredibly capable--

Jess: Is this your dream architecture?

Cory: If you actually read the 16 items, it's not just a manifesto, it's also an architecture.

Jess: Okay, okay, that makes sense.

Cory: Right. And so it's why it is elements like, you know, no more single player, because it's neither just you, it's not just an agent, but it's also probably not just one person and one agent either.

Ken: This is where you're getting into context. And so one of the things you have in the manifesto is if you don't know where you stand, you cannot calculate the path, destination. Right? So part of this is I guess democratizing context, getting out of engineers heads to some degree and having the designers and the business people and such contribute their context to everything. Right?

Cory: Absolutely. And you know, going back to all the way from whether you're just a solo entrepreneur to you're somebody working at a humongous hyperscaler, you know, ultimately-- So maybe you're doing art, which is totally awesome and fantastic and so that you're doing for yourself, maybe the audience isn't actually as important to you.

If you're trying to do a business, at some point, what you are building, the audience you're building it for the goals of your company, your business, your mission-- That's ultimately what you're serving. That's ultimately what is directing. And so how do you create structure, agents, and process such that if you're now getting way more ideas, you better have some way to be ranking them, evaluating them, talking about that they actually make the product better?

Wait, that means you have to be able to define what better is. That means you need to be able to measure what better is. Back at Google, we had really fierce debates about one of the hardest discussions in software engineering, which is how productive are we? Are we really succeeding? And the notion of rate of delivery of positive change became the strongest framing of that we could come up with.

Because it seems like such a simple phrase, but it's kind of embedding the iron price in a bunch of places. Right? Because positive change means. Do you know what positive change is? Can you measure it? Can you prove it?

Delivery-- Did what you change actually get used by your customer? How much did it get used? Are you measuring how often your deployments aren't just successful deployments and not disasters, but actually generated the positive change you hypothesized?

And that framing, to me, aligns with outcomes in this very, very direct way. And you'll see it as a theme throughout the manifesto.
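One way to make "rate of delivery of positive change" concrete is to count only deployments that shipped, were actually used, and produced a measured improvement. The sketch below is an illustrative reading of the phrase, not a metric definition from Google or the manifesto; the `Deployment` fields and the per-week normalization are assumptions.

```typescript
// Hypothetical record of one deployment; field names are illustrative.
interface Deployment {
  succeeded: boolean;          // shipped without rollback or incident
  usedByCustomers: boolean;    // did anyone actually use the change?
  hypothesizedLift: number;    // predicted change in the chosen metric
  measuredLift: number | null; // observed change; null if never measured
}

// A deployment counts only if it was delivered, used, and measurably positive.
function isPositiveChange(d: Deployment): boolean {
  return (
    d.succeeded &&
    d.usedByCustomers &&
    d.measuredLift !== null &&
    d.measuredLift > 0
  );
}

// Positive changes delivered per week over the observed window.
function positiveChangeRate(deploys: Deployment[], weeks: number): number {
  if (weeks <= 0) throw new RangeError("weeks must be positive");
  return deploys.filter(isPositiveChange).length / weeks;
}
```

Note that an unmeasured deployment contributes nothing here, which mirrors the point in the conversation: a change you cannot prove was positive does not count.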

Jess: And that brings us to today's headline from the o16g news aggregator, which is Governance Moves from Promises to Runtime Proof.

Cory: Yeah. So it was sort of smile inducing that we all knew this call was coming today. And I looked up what the agents had produced and literally it's a headline. And then you go in to read it and it is a, basically a rant in favor of observability and why observability is just more important in an agentic era than ever before.

And we were chatting before we started recording. But how all this works is after I had written the manifesto, and so then on this flight home, had Claude do this initial version, and I had seeded it with a few RSS feeds of some of the obvious AI authors and thinkers. And then every time it finds an article that it scores highly, it then goes looking to see if that author has a blog or RSS feed or an Atom feed.

It just adds it into the corpus. And so it's just been spidering its way out through AI authors. And then throughout the day it's writing sort of mini versions of the brief and ranking articles and scoring them. And then it combines them into something that probably will eventually be a newsletter, but right now I just read it on the web in the morning and it has been, because there's so much happening in AI, it's just impossible to try to keep up with everything.

And what it does is it uses the outcome engineering framework as a way to sort of organize and hang articles together. And in fact, if you go into the manifesto now, each of the 16 points now links out to the hundreds and hundreds of articles that it has found that read against those ideas. And it has been-- Obviously like, as we were talking about, the 16 principles are me being a little bit too cute, but at the same time--

So this will obviously change and it's obviously not right or perfect because, look, nobody's got a monopoly on correctness. But it has been a pretty interesting way to go read. And also because they broadly split 8 and 8 into sort of doing and governance, it also has made for some interesting statistics around, well, are articles more leaning toward doing? Are they more leaning toward governance? How does that track with optimism?

And if you scroll all the way down the front page, there are a bunch of sentiment and other stats being generated off of all the articles as well. Because once you're generating a bunch of articles and you have agents running in Cloudflare workflows, at some point you're once again bored on an airplane and you say, "hey, Claude, what else could we do here?"

And it has been, I think, kind of illuminating to watch. It turns out the people writing the doing posts are substantially more optimistic than the people writing the governance posts. Not surprising, but the degree to which the doers are more optimistic is like 60 points or something. So it's a pretty dramatic difference than the people thinking about how do you measure whether these things are working or not?

And particularly on days when, you know, we have stories like Copilot adding 1.5 million ads have been added by Copilot to people's commits, which I think is, one might say, an unexpected result, if you've been using Copilot. It does lean into how are we measuring, how are we discovering?

And certainly with what we do at Onebrief. Because we are building military decision making and planning software. So it's workflow software for the military. We think a lot about what happens when new versions of agents come out, when they get adopted, when they move on to secure networks. Verification and validation is the entire game.

Jess: Right.

Cory: Because on the one hand, yay, I am glad that DOD is saying we want to bring more frontier models in, but frontier models get updated every week right now, and more than that, they get updated on the back end and you don't even know that they're getting updated because clearly the frontier model operators, they are operating within power constraints and usage constraints and they're all trying to run businesses.

So they're making decisions about how their models are deployed and how they're being used. And if you use the agentic coding tools every day, it's pretty noticeable when they're having an off day and it doesn't always correspond to an outage.

You know, it's kind of unsurprising. I think it was Microsoft who just launched the "why don't you have all the models ask questions of your thing and then we'll come back with sort of some sort of voted combination."

But coding is the same way where I think it would be pretty foolish to bet your code on only one model at any one time. Also because even when you prime models with pretty strong roles, frontier models from one manufacturer tend to converge on agreement. And you get a little less agreement if you throw different model makers at the problem.
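The idea of not betting on a single model, and of discounting agreement among models from the same maker, could be sketched as a vote that counts each vendor once. This is an illustrative assumption about how such a vote might work, not a description of any vendor's product; `ModelAnswer` and `voteAcrossVendors` are hypothetical names.

```typescript
// Hypothetical answer record; vendor and model names are placeholders.
interface ModelAnswer {
  vendor: string; // the model maker
  model: string;  // the specific model
  answer: string; // the model's verdict, e.g. "approve" or "reject"
}

// Picks the answer backed by the most distinct vendors.
// Several models from one vendor count as a single vote.
// Returns null when there are no answers or the top spot is tied.
function voteAcrossVendors(answers: ModelAnswer[]): string | null {
  const backers = new Map<string, Set<string>>(); // answer -> vendors behind it
  for (const a of answers) {
    const key = a.answer.trim().toLowerCase();
    const vendors = backers.get(key) ?? new Set<string>();
    vendors.add(a.vendor);
    backers.set(key, vendors);
  }
  const ranked = Array.from(backers.entries())
    .map(([answer, vendors]) => ({ answer, count: vendors.size }))
    .sort((x, y) => y.count - x.count);
  if (ranked.length === 0) return null;
  if (ranked.length > 1 && ranked[0].count === ranked[1].count) return null;
  return ranked[0].answer;
}
```

Returning `null` on a tie leaves the decision to a human or a further round, rather than silently picking one side.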

Jess: That makes sense.

Cory: And you see some of that in the manifesto, I talked about that. And so two months later it's kind of exciting to see, nope, there are actual products being produced at Hyperscalers that are realizing that these are some of the ideas you need to run with because--

Look, we just all want this capability. We want more ability to create the things that we think would be important to be created and to know that they're actually working. Because if you don't know they're having the outcomes, they don't really know anything.

Jess: Right. So observability is crucial to "did we succeed?" And also easier than ever to add.

Cory: One hundred percent.

And it's hard to imagine building almost anything at this point without at least a little bit of observability in there.

Because again especially when we're doing things that are complicated or multi service, it's so hard to know that your tests are really covering all of the cases as opposed to "no, no, no, what's really happening?" And oh by the way, if we can't be bothered to estimate in some way, shape or form what we think success looks like, well then we really are just vibing and that's okay.

There are times where I just want to see something. I can't even imagine how this UI might work. So let's just throw up placeholders and go play with it and move it around and actually see how it feels. And there's value in that.

I think one of the really big changes for software development is there used to just sort of be like it's something in production and that would have a certain bar attached to it and a certain amount of hopefully rigor, hopefully observability. But what happens when you just want to put a demo in front of a user?

Okay, does that need the full bar? Maybe, maybe not. Depends a little bit on your architecture.

Jess: Because success there is answering a question.

Cory: Success there is answering a question. Unless of course bad code there could expose private user data or take down the whole system or--

Jess: There are failures.

Cory: There are failures. So what's your criteria? What's the criteria for your-- Like are you working in medical systems? Well guess what, your bar is going to be really different. If you're in heavily regulated industries, you're going to be operating in a different way.

Jess: If this is your own website, you can work on it on a plane. No big deal.

Cory: That's right because the, "Oh actually it turns out--"

So the blog itself is Astro and Astro is super super cranky about YAML front matter having any mistakes in it. It just full on panics. It is like "I don't know what to do. You've clearly decided to throw garbage at me and I'm just going to throw up my hands."

And so let's imagine you have, you're pulling stories and you're building cards. It's pretty easy for a model to make a mistake there. And so okay, and even with observability coverage and even with like o16g has gone down more than once. But so what?
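A guard against the failure mode described here, model-generated cards with malformed front matter, might look something like the following. It is a deliberately crude structural check, not a YAML parser and not Astro's own validation; the function name and the required-keys idea are assumptions for illustration.

```typescript
// Crude pre-commit check for model-generated Markdown cards.
// It verifies only the front matter fences and top-level "key: value" lines;
// nested values, list items, and comments are skipped, not validated.
function checkFrontMatter(markdown: string, requiredKeys: string[]): string[] {
  const lines = markdown.split(/\r?\n/);
  if (lines[0] !== "---") {
    return ["file does not start with a '---' front matter fence"];
  }
  const end = lines.indexOf("---", 1);
  if (end === -1) {
    return ["front matter is never closed with '---'"];
  }
  const problems: string[] = [];
  const seen = new Set<string>();
  for (const line of lines.slice(1, end)) {
    const skippable =
      line.trim() === "" ||
      line.startsWith(" ") ||  // indented: part of a nested value
      line.startsWith("- ") || // list item
      line.startsWith("#");    // comment
    if (skippable) continue;
    const match = /^([A-Za-z_][\w-]*):(\s|$)/.exec(line);
    if (match === null) {
      problems.push(`not a 'key: value' line: ${line}`);
      continue;
    }
    seen.add(match[1]);
  }
  for (const key of requiredKeys) {
    if (!seen.has(key)) problems.push(`missing required key: ${key}`);
  }
  return problems; // an empty array means nothing suspicious was found
}
```

Running a check like this before adding a card to the content directory would turn a build-time panic into a rejected card, though a real pipeline would more likely use a proper YAML parser and a schema.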

Jess: Do you put a CI check in there for that?

Cory: So yes you got a CI check in there that was going to a filtered email address. So it was happily generating the report and then I had successfully filtered somewhere where I was never going to look at it. So win-win really from my perspective.

Jess: Haha. Generate the information and then ignore it. Haha.

Ken: No verifying this reality. Haha.

Jess: Yeah, which also, observability, if you don't look at it, if you're not going back and checking the results again, you're just spending network and bits and compute for nothing.

Cory: That's right.

Jess: We have a value at Honeycomb, "everything is an experiment" and I think that's nice but I add to it "everything is an experiment if you look at the results."

Cory: 100%. And also like an experiment means hypothesis, generating results, looking at the results. You know it is the p-value hacking versions in product development of "let me just cherry pick the data and run around high fiving everyone." Like again we've all had-- Okay, you all haven't because you all are really good at this. But, like, let me assure you, I have done that. We have all done that at some point.

Jess: Half the point of statistics is to convince people of what is obviously right, but they don't know enough about it to believe you. Haha.

Cory: Yeah, I think that there is great wisdom in that statement. But like going back to your creativity piece, because what's been fun about writing this and putting it up is there's actually been a fair amount of feedback about it and a lot of side channel discussions.

And the creativity piece is one that I think people really are genuinely wrestling with. And to my mind, goes back to, look, nobody's saying you can't write code. You want to write code because writing code makes you happy. Fricking go write code.

Jess: It's puzzle solving. And puzzle solving is fun.

Cory: 100%. Understand that a competitor might be coming after you and they might be a lot faster than you are. And so choose your battles, choose where you want to go. But also, if you haven't tried to be creative in partnership with AI, much like being creative in partnership with other people, it can be a very rewarding experience. But it's not just, you know, "hey, OpenAI or Gemini, make me an image." Great. Done.

Jess: No, no. Step one, get the AI to write the thing. Step two, change everything.

Cory: That's right.

Jess: Which you did with your manifesto.

Cory: And I think we've again, we've all, whether it's code, whether it's documents, whether it's images, AI can help get you unstuck.

Jess: Oh, especially with CSS.

Ken: Oh, oh, yeah, yeah.

Cory: Or wherever your weak spot is. Command line bash is not my favorite thing. And it has been not my favorite thing since like 1992. And it has remained not my favorite thing. And boy, like, AI helps with that. Though, hilariously, you know, it will make mistakes there. And you want to really have a hilarious day, have it make mistakes in your shell scripts on your Mac.

You have this hilarious moment of like, oh, yeah, let me go fix that. And that's going to be a little bit more fun to go fix. But again, it gets you unstuck. It helps you move forward. It's sort of an interesting popularity contest of like, what technologies is it picking for any given solution? I find that one really interesting to look at because you don't always agree.

But the second piece of feedback that a lot of people gave me, which was one that I found actually kind of maddening, was-- One of the assertions in the manifesto is that the backlog goes away. Because if you don't have human time and attention as a limit, go build the things because--

Jess: You did say earlier that there was prioritization to do--

Cory: Oh no, a hundred percent. It means you're making decisions based on intention and priority, not based on, well, I've only got three engineers. Because if you have 100x the capacity, you might be thinking about priority really differently. And so because short punchy statements are fun. Right?

You make statements about the backlog being dead and that generated a lot of side channels that mostly were framed around, "but the backlog is how I keep my product good." You're like, "okay, tell me more."

And you eventually get to, it is this giant passive aggressive game of we stuff things into the backlog rather than saying no to things.

Ken: Haha.

Jess: Ooh.

Cory: Right? We haven't said no, we just haven't gotten to it yet.

Ken: We're appeasing for the moment and get to it eventually if we can.

Cory: That's right, if we can. And I think, look, that might work really well for some teams and some groups. That's fine. It just, that doesn't work for me. I think that direct conversations about things tend to get you to better outcomes and better places. And I don't want some team or person frustrated because what they think of as a very important idea is sitting on the backlog.

And so I'd much rather, if it's cheap enough to build and it's not dangerous because you're not going to leak user data and you're not going to take down your whole system-- Great, go build it.

Jess: If you can measure and find out which of you is wrong.

Cory: That's right. Or maybe you're both right. Right? Maybe it's neither of the two ideas. Putting things into production, it's a little bit like the military analogy, right?

No product survives first contact with the user and it is super important to have strong opinions that are weakly held.

And you might be 100% fricking sure that either your idea is glorious or this other idea is terrible. I'm pretty sure we've all been wrong about that. I mean it is the nice thing of having gotten started with arcade games because there's really nothing like pouring your heart and soul into some game mechanic that you are 100% certain is the greatest mechanic that has ever been invented anywhere, ever.

And then you put it in front of a bunch of caffeine and pizza fueled 14-year-olds and watch them ignore it. And that is a humbling experience because it turns out you can't actually go out and negotiate with them. And it also doesn't mean they can tell you what good is, which is the other trap to fall into.

And so what do you need to do? You need to go try something else and it might be surfacing it more. Or you might just be wrong. It might actually not be the best mechanic ever and maybe it's a terrible mechanic and you have to rethink the game. And products are no different. Technology is no different.

We delivered a piece of infrastructure to a team only to have the team not use it. And it's not because they hate you and it's not because they're bad. You were just wrong about some level of understanding of their need, how they worked, their technology, your technology, the interface, like all these things.

Jess: And if you can react to that with curiosity, then you can move forward.

Cory: Well after the seething frustration and anger. But yeah, sure.

Jess: Haha!

Cory: Like, curiosity is the seventh or eighth thing, right? And it is really, really exciting to be able to think about, "no, wait, it's actually kind of cheap to go try this other idea." So long as, you know, you have observability, you have an ability to state what you think it's going to do and then when it does something wildly different, thank goodness, capture that also. Right?

That's why we're actually talking about observability and not just metrics. Right? It is, you know that actually when you're like, "well, I think it's going to increase whatever signup flow or whatever," but what it actually generates is more retention in a weird way because people are coming in primed better.

Oh well that's cool. But I actually needed a longer window on engagement to detect that. You know, we think about things that I think start getting really curious, in a world where we're able to try a lot more things, it's really easy to answer a question that has some immediate short term time window on it. Right?

Like you're looking at just click-through rate on a particular page or share rate or all the things that we watch products hyper-optimize on. And frankly, the whole reason why most of the mobile web is just this sustained cacophony of overlapping stuff is because every one of those is some PM who is chasing a click-through metric.

Jess: Yeah. And it's very easy to measure, "Oh yes, they clicked on this" and it's hard to measure, "They said screw this and closed the website."

Cory: Yeah, a hundred percent. Yes, and they burned out on your website two months later because they could put up with it for, you know, a month. But at the end of that--

Jess: That's hard to attribute.

Cory: It's hard. And we've certainly seen-- It's where we see attention reinforcement broadly be so successful because everybody's measuring--

Jess: What's attention reinforcement?

Cory: It's how every conventional feed gets ranked. So you look at what gets attention and you rank more of that and put more of that in front of you.

Jess: Oh, okay.

Cory: And so it's the core algorithm of, well, everything. And it is a very common way if you're going to do like a content feedback. And so you say, well, what is attention? Attention is comments and likes and clicks and take the things that are getting a bunch of those and jam more of them in.

And unfortunately it's really then easy to hijack. And so you hijack it with outrage and other things. And so part of what I love about agents is they give you very different ways to think about code and content and interactions. Because what if rather than just, and actually what o16g does, like there are no click signals, there is no data collected of any kind and instead it's agents being prompted with, "okay, so for a technical reader interested in AI, rank these 20 stories."
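The two ranking approaches contrasted here can be sketched in a few lines of Python. This is a minimal sketch, not o16g's or any real feed's implementation: the `Story` fields, the equal weighting of comments, likes, and clicks, and the helper names are all assumptions made for illustration, and the actual call to an agent is left out.

```python
from dataclasses import dataclass


@dataclass
class Story:
    title: str
    comments: int = 0
    likes: int = 0
    clicks: int = 0


def attention_score(story: Story) -> int:
    # Hypothetical scoring: attention is simply the sum of engagement signals.
    return story.comments + story.likes + story.clicks


def rank_by_attention(stories: list[Story]) -> list[Story]:
    # Attention reinforcement: whatever already gets attention is shown first,
    # which is why outrage that drives comments and clicks can hijack the feed.
    return sorted(stories, key=attention_score, reverse=True)


def build_ranking_prompt(stories: list[Story], reader: str) -> str:
    # Interpretive ranking: no user signals are used, only a description of
    # the reader and the story titles (assumed prompt wording).
    lines = [f"{i}. {s.title}" for i, s in enumerate(stories, start=1)]
    return f"For {reader}, rank these {len(stories)} stories:\n" + "\n".join(lines)


stories = [
    Story("Careful benchmark of agent memory", comments=3, likes=10, clicks=40),
    Story("Outrage bait about AI", comments=90, likes=200, clicks=900),
]
print([s.title for s in rank_by_attention(stories)])
print(build_ranking_prompt(stories, "a technical reader interested in AI"))
```

The prompt contains no engagement counts at all, so the second path needs no click data to be collected or stored.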

Jess: Okay, so there's an interpretive ranking instead of working on the limited signals that you can extract from users.

Cory: Correct. And it also means that you can do it without extracting potentially any data from users or no attributed data. Which is nice because now you don't have to actually hold on to attributed data.

Ken: You simply understand what your audience is made up of and get a good description of it.

Cory: Yeah, you can aggregate, like aggregated data is super useful and incredibly valuable, but you think about like things that are risky to hold onto. Particularly again if you're operating in highly regulated industries, it's super useful to not be able to get back to a human at times. But for a lot of conventional ranking tech, you effectively needed to be able to do that.

Again, AI just gives you these different capabilities. So as you think about the joy of building products and technology with it, part of one other aspect of this that I find very exciting is the layers of complexity around making product or technology development better.

So succeeding at outcome engineering tends to look really similar to a lot of product complexity. And so the skills, the technology, the tools you use, observability, the algorithms you bring to it, the heuristics you bring to it that you apply from a product development perspective-- Are we working on the right thing?

Ken, you and I were chatting before this started about companies often ship their org charts. And so there's this fun question of like, well, what does that mean in an agentic space? Like, are the agents just sort of modeling your org? And I think to some degree it's even more complicated than that because I think your agents are in fact another org you have to be thinking about if you're really operating in a multi agentic environment, particularly with agents that have memory, agents that understand what is the mission of your company, what are your current goals?

Why are you shipping this product? Why wouldn't you want your agents to know that before they're writing your code? Because you want your human teams to know that. It's actually really scary if you wander around and ask a bunch of engineers, hey, so what's the company's mission? And they're like, "I don't know. But this algorithm's really interesting."

It's like they're still super smart and they're maybe doing something incredibly cool and important. But wouldn't it be better if they also knew? Nobody needs to know everything that's going on. There's madness in the, you know, complete transparency of everybody who needs to know everything like that, that doesn't work either.

But it's super helpful to know, like, what are the company's priorities right now? What are your priorities right now? What are your team's priorities right now? Those are, to my mind, pretty mandatory parts of effective companies and teams. Yet we blind agents to that. We don't even think about building tools and systems that would allow agents to know those things.

And the manifesto talks about this as actually being really important, where if you know where your company's going, you know where your goals are, you want your agents to know that also because that lets them choose technology with that context, lets them choose particular algorithmic choices with that context. And like the really obvious cases, again, if you're in a regulated industry, you really want the agent to know that.

Jess: Right. You want it to know not to retain individual user data.

Cory: Exactly. But that's no different than, I think we've all had a moment where some junior engineer is like, "hey, you know, I figured out I can log every keystroke and it would help me do xyz." And you're like, "yeah, but maybe we don't want to do that."

Ken: Right.

Cory: And where is that pull request so we can go find it and make sure it doesn't get any farther? You know, it's a super well meaning moment. And that's a lot of where agents are. Right?

Agents know the entire history of computer science. They know basically every piece of technology up to their training cutoff, but they don't have any what we would think of as wisdom.

And they don't have--

Jess: They don't know what's appropriate.

Cory: That's right. That's right. And having been a junior engineer, that feels very familiar. Right? And so how do you help somebody in that context? Well, you give them context, you give them guardrails and guidance. And so I think if you start from company mission and goals and that flows all the way through to maybe the far end, if we thought of this conceptually kind of as a continuum, observability is then all the way on the other end.

Jess: So that it loops back?

Cory: So it loops all the way back. Right? Can you look at this not just at the question of did this change help a user, but did this change actually contribute to what are our goals this month, this week, this quarter--

Jess: Or at least is it consistent with the company goals?

Cory: Yeah, no, I love that. Right. And where I think again things get super exciting: Is it consistent with company mission goals? That's a great question to ask an AI. That's something that would have been very, very hard to monitor pre agents.

That's a pretty trivial question for an agent to answer. But it can only answer it if it has access to your mission and goals on the one end, then it has access to the code it produced and then it has access to, well, what were the results of shipping this? Which is observability.
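The three inputs named here (mission and goals, the code produced, and the observed results) can be pictured as one bundle that must be complete before the question is asked. The sketch below is only an illustration under assumptions: `ChangeReview`, its field names, and the prompt wording are hypothetical, and sending the prompt to an agent is omitted.

```python
from dataclasses import dataclass


@dataclass
class ChangeReview:
    mission_and_goals: str   # what the company is trying to do
    code_change: str         # what the agent produced, e.g. a diff summary
    observed_results: str    # what observability reported after shipping

    def missing_inputs(self) -> list[str]:
        # Names of any inputs that are blank.
        return [name for name, value in vars(self).items() if not value.strip()]

    def to_prompt(self) -> str:
        # Refuse to ask the question unless all three inputs are present.
        missing = self.missing_inputs()
        if missing:
            raise ValueError(f"cannot check consistency without: {missing}")
        return (
            "Is this change consistent with the company mission and goals?\n"
            f"Mission and goals: {self.mission_and_goals}\n"
            f"Code change: {self.code_change}\n"
            f"Observed results: {self.observed_results}"
        )


review = ChangeReview(
    mission_and_goals="Example goal: improve long-term retention",
    code_change="Example change: simplified signup flow",
    observed_results="",  # nothing observed yet, so the loop cannot close
)
print(review.missing_inputs())
```

With `observed_results` left blank, `to_prompt()` raises an error, which mirrors the point that without observability there is nothing to loop back on.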

Jess: Yes.

Ken: So unfortunately we have to wrap it up here, but I would just make sure that anyone who's listening to this goes and looks at o16g.com, which is where your manifesto is. And where else can we find you, Cory?

Cory: I'm also on Cory.News. C-O-R-Y.News is where I blog and I'm also pretty easy to find because I have an Internet unique name.

Ken: Awesome.

Jess: Oh this was wonderful.

Cory: Thank you both so much. This is so much fun.

Ken: This is great. Thank you so much.
